Why AI agents will expose the same adoption problem PLM has always had

PLM Adoption Was Always Harder Than the Software Story

For as long as I can remember, adoption was one of the biggest hidden problems in PLM.

The industry usually preferred to speak about functionality, architecture, workflows, governance, integration, and control. Those things mattered, of course. But underneath all of that was a much simpler and much more stubborn reality: many users still found it easier to dump things into Excel.

That behavior was often explained away as resistance to change or a lack of discipline. I think that was always too simplistic. In many cases, Excel was not just a workaround. It was a signal. It signaled that the formal system did not fully match the way work actually happened. People relied on spreadsheets, email, shared folders, and side conversations not only because they were attached to old habits, but because those tools gave them room to handle exceptions, ambiguity, and local decisions in ways enterprise systems often did not.

At a higher level, companies tried to solve this with organizational change management, executive sponsorship, consulting programs, and long transformation initiatives. That was real too. PLM was never just a software rollout. It was always an attempt to reshape how an organization works, collaborates, and makes decisions. So both sides of the problem existed at the same time. At the local level, users escaped into familiar tools because they needed something easier and more adaptive. At the strategic level, leadership realized that successful PLM adoption required organizational alignment that was much harder than deploying software.

That is the backdrop for today’s discussion about AI agents.

From SaaSification to Agentification: A Familiar Promise

We are now entering a new wave. The industry is increasingly talking about AI agents and, in some circles, the “agentification of PLM.”

The phrase itself is worth pausing on. It follows a pattern the PLM world has seen before. A few years ago, the answer was SaaSification. Moving PLM to the cloud, the argument went, would remove deployment friction, lower costs, and accelerate adoption. That shift was real and meaningful. But it did not solve the adoption problem. Organizations that had not defined their work clearly enough for an on-premise system still had not defined it clearly enough for a cloud system. The technology changed. The underlying challenge did not.

Agentification follows the same logic. Building an agent is becoming easier every month. Deploying one is a shrinking technical problem. But the hard question remains identical to the one PLM vendors have faced for twenty years: how does the technology connect to the actual way people work?

I believe agents are important, and I believe they can become genuinely useful in engineering and manufacturing. But there is a prerequisite nobody in this wave is talking about clearly enough.

The hard problem is not building an agent.

The hard problem is figuring out what you actually do.

The “Now What” Problem: When AI Agents Meet Real PLM Work

A recent video by Nate, focused on AI agents in general rather than on PLM specifically, identified something that every company implementing PLM and building an AI strategy should pay close attention to. He tracked hundreds of agent deployments and found a consistent pattern: installation is no longer the problem. The most common message in every agent community after a successful setup is three words: “Okay… now what?”

That is not a technical support question. It is what happens when you sit down to delegate work and realize you have never described your own operating logic at a resolution that anyone, or anything, else can act on.

In PLM environments, this moment has a very specific shape.

Consider the BOM release. Every organization has a process for it. Most have a workflow configured in a system. But the real operating logic for releasing a BOM is not fully captured in any workflow diagram. Each organization has its own set of rules and tacit knowledge about which tasks must be performed before its specific product is considered release-ready. One company requires that every make part has a confirmed manufacturing routing before the BOM can move. Another requires sourcing sign-off on every new supplier introduction, even at the prototype stage. A third has a different definition of completeness for hardware versus software components in an embedded product. These rules are real. They are enforced daily. And they exist almost entirely in the heads of the people who have been doing this work for years.

Now put an agent on top of that BOM release process. The agent has access to the data. The agent can see the workflow states. But the agent does not know what “complete” means in this organization, for this product, at this stage of the program. Nobody has written it down in a form the agent can use. The result is the same “now what” moment Nate describes: the system is running, but it does not know how to act, because the operational knowledge that would tell it what to do has never been made explicit.

Capturing those BOM release rules is not a data problem. It is not a workflow configuration problem. It is a work-understanding problem. And it is exactly the prerequisite that gets skipped when companies approach “agentification of PLM” as a technology initiative.
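To make the BOM release example a little more tangible, here is a minimal sketch of what "writing the rules down in a form an agent can use" might look like. It encodes two of the rules mentioned above (make parts need a confirmed routing, new suppliers need sourcing sign-off) as small, named checks. Everything here is an assumption for illustration: the `BomItem` fields, the rule names, and the part numbers are hypothetical and do not describe any particular PLM system or OpenBOM API.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class BomItem:
    # Hypothetical fields, chosen only to express the two example rules.
    part_number: str
    kind: str                       # "make" or "buy"
    has_routing: bool = False       # a confirmed manufacturing routing exists
    new_supplier: bool = False      # the item introduces a new supplier
    sourcing_signoff: bool = False  # sourcing has signed off on that supplier


# A rule inspects one item and returns a problem description, or None if it passes.
Rule = Callable[[BomItem], Optional[str]]


def make_parts_need_routing(item: BomItem) -> Optional[str]:
    if item.kind == "make" and not item.has_routing:
        return f"{item.part_number}: make part has no confirmed routing"
    return None


def new_suppliers_need_signoff(item: BomItem) -> Optional[str]:
    if item.new_supplier and not item.sourcing_signoff:
        return f"{item.part_number}: new supplier lacks sourcing sign-off"
    return None


# The organization's explicit definition of "release-ready" for this sketch.
RELEASE_RULES: List[Rule] = [make_parts_need_routing, new_suppliers_need_signoff]


def release_blockers(bom: List[BomItem], rules: List[Rule] = RELEASE_RULES) -> List[str]:
    """Return every reason the BOM is not release-ready (empty list means ready)."""
    blockers = []
    for item in bom:
        for rule in rules:
            problem = rule(item)
            if problem is not None:
                blockers.append(problem)
    return blockers


bom = [
    BomItem("BRKT-100", kind="make", has_routing=True),
    BomItem("HSG-200", kind="make"),
    BomItem("CAP-300", kind="buy", new_supplier=True),
]
for line in release_blockers(bom):
    print(line)
```

The code itself is trivial, and that is the point. The hard part is the conversation that produces the list of rules, not the twenty lines that check them.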

Why AI Agents Run Into the Same PLM Adoption Wall

This is where the connection deepens.

PLM adoption has always struggled with the distance between formal process and actual behavior. Old habits die hard. Systems were designed to represent product structures, revisions, workflows, approvals, and controlled handoffs. But organizations do not run only on formal process. They also run on tacit decisions, undocumented exceptions, workarounds, local coordination, shortcuts, habits, judgment calls, and informal sequencing of tasks across teams.

These things are not accidental. They are often how work actually gets done. That means AI agents are not escaping the PLM adoption problem. They are running directly into the same wall.

If an organization never made its work explicit enough for people to use the system naturally, it is very unlikely that an agent will magically understand it. If engineering, manufacturing, sourcing, and operations still depend on unwritten rules, spreadsheets, folder conventions, and side-channel communication, then an agent placed on top of that environment will inherit the ambiguity. It may look more modern. The underlying problem will still be there.

An agent on top of an unclear process is not transformation. It is automation of ambiguity.

Tacit Knowledge: The Hidden Layer AI Agents Cannot See

The deeper mechanism is worth understanding, because it explains why work-definition is so hard, not just inconvenient.

The more senior and experienced a knowledge worker becomes, the more their work shifts from explicit process to tacit judgment. That is not a flaw. It is how expertise develops. Over time, people stop consciously following each step and start operating through compressed intuition. They know what to check, what to compare, who to involve, and when something feels wrong, but they often cannot describe those things step by step because they no longer think that way.

This is very familiar in PLM environments. A strong engineering manager does not simply follow the workflow. A manufacturing planner does not just move data between stages. A change coordinator does not merely route an ECO. These people are constantly making small but important judgments based on context that is rarely written down.

They know which BOM issue will create downstream disruption. They know which supplier substitution is actually risky regardless of what the approved vendor list says. They know which file version matters when two exist with nearly identical names. They know which change request looks routine on paper but will become urgent in practice. They know when a formal process is enough and when a real conversation needs to happen before anything can move forward.

That knowledge is valuable. It is also exactly the kind of knowledge that is hardest to delegate, whether to another person or to an agent.

What to Capture Before You Deploy AI Agents in PLM

Before a company begins its agentification work, it should ask some uncomfortable but necessary questions.

What decisions are people making every day that are not reflected in the system? Where do handoffs still rely on human memory, spreadsheets, and side conversations? Which recurring tasks are simple on paper but messy in reality? What information do people always need before they can trust a result? Where does context get lost between design, BOMs, sourcing, manufacturing, and change processes?

These questions matter because an agent does not only need access to information. It needs operational context. It needs to know what counts as meaningful, what counts as complete, what should be escalated, what can be handled automatically, and when an exception is normal versus dangerous.
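One way to picture operational context is as an explicit triage policy: given a piece of work, should it be handled automatically, escalated to a person, or stopped? The sketch below assumes a hypothetical `ChangeRequest` shape and an invented cost threshold. Neither comes from a real system, and the actual conditions would have to be elicited from the people who make these calls today.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    AUTO_HANDLE = "auto_handle"  # normal case, no person needed
    ESCALATE = "escalate"        # needs human judgment
    BLOCK = "block"              # dangerous exception, stop here


@dataclass
class ChangeRequest:
    # Hypothetical fields for illustration only.
    request_id: str
    affects_released_bom: bool
    supplier_substitution: bool
    cost_delta_pct: float  # estimated unit-cost change, in percent


# Invented threshold; a real value has to come from the organization itself.
COST_ESCALATION_PCT = 5.0


def triage(cr: ChangeRequest) -> Action:
    # Dangerous exception: swapping a supplier on an already released BOM.
    if cr.affects_released_bom and cr.supplier_substitution:
        return Action.BLOCK
    # Needs a person: touches released data or moves cost noticeably.
    if cr.affects_released_bom or abs(cr.cost_delta_pct) > COST_ESCALATION_PCT:
        return Action.ESCALATE
    # Everything else is treated as routine.
    return Action.AUTO_HANDLE


print(triage(ChangeRequest("CR-1", False, False, 1.0)))
print(triage(ChangeRequest("CR-2", True, True, 0.0)))
```

Each branch in `triage` stands in for a judgment someone currently makes from memory. If nobody can say what belongs in those branches, the agent cannot know either.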

The practical starting point is more concrete than it might sound. Review the actual working processes in your organization. Not the official process documentation, but the real tasks people perform every day. Map engineering tasks and current workflows, including the manual steps, the offline decisions, and the places where someone always does something the system does not capture. Create a mental map of how work actually flows. That map is the foundation for any useful agent work, and it is the step that most “agentification” conversations skip entirely.

I am working on prompts and tools to help with exactly this kind of structured work elicitation, and I will be sharing them soon. The point for now is that this step cannot be outsourced to the agent itself. It requires the organization to look at its own work honestly.

How OpenBOM Approaches AI Agents in Engineering

This is also where I want to explain how we approach it at OpenBOM.

Our view is that the future of AI agents in engineering should not begin with a fantasy of PLM intelligence that suddenly understands the whole company and the work everyone does. That sounds attractive in presentations, but it skips over the hardest part. We believe the better path starts with the most basic work tasks, the activities users already perform every day, where friction is real, value is immediate, and context can begin to accumulate naturally.

Instead of starting from the assumption that AI will somehow automate the whole lifecycle, we think it makes more sense to begin with focused assistance around the core elements of engineering work. Help users with what they are already doing. Reduce friction in daily tasks. Capture context in the flow of real activity. Build structured understanding from the bottom up.

That is, in my view, a much more realistic and much more adoptable strategy. Because if adoption was always the hidden challenge of PLM, then AI should begin where adoption can actually happen: in daily work.

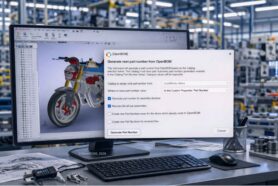

Why CAD Files Are Still the Core of Engineering Work

That is one of the reasons CAD File Agent is such an important part of our strategy, and it is worth explaining why files specifically.

Even after more than a decade of cloudification, SaaSification, and every other technology transformation the industry has attempted, files remain one of the most fundamental elements of engineering work. CAD files, drawings, assemblies, and related design documents are still the primary output of engineering activity. They are where product intent is created, communicated, revised, and handed off. And the practical reality for the vast majority of engineering organizations today is that these files are still sitting on local computers, shared drives, and outdated CAD vaults. They are not organized in the clean, accessible, structured way that transformation roadmaps assume. They are the accumulated product of real daily work, with all the inconsistency, redundancy, and missing context that implies.

This is not a niche observation about a corner case. It is the baseline condition of most engineering organizations in the market. Files are where work begins. Files are also where context is most often lost.

If an agent can help engineers with files in a useful and natural way, helping them organize, find, understand, and work with design files in the flow of their actual tasks, that creates immediate value. But more importantly, it creates a foundation. It begins capturing the context around one of the most basic elements of product creation. It starts from work people already do, rather than from an abstract promise that the system will somehow reason over the whole lifecycle from day one.

The first useful agent is not the one that claims to run PLM. It is the one that helps people with the real work they already do every day.

From Daily Task Assistance to Operational Product Memory

This bottom-up approach matters for adoption in a deeper way than it might first appear.

PLM often struggled because organizations tried to impose a complete formal structure before the software had earned trust in daily work. Agents could repeat the same mistake if they are introduced first as a grand automation strategy rather than as something that helps people with the practical tasks that already fill their day.

Successful agent adoption will probably look much less like a revolution and much more like a gradual layering of useful assistance on top of real activity. First, help with a task. Then, capture the context around that task. Then, identify the recurring decisions, dependencies, and patterns. Then, build from assistance toward something that begins to resemble operational product memory.
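The layering described above can be sketched in a few lines: record a small piece of context each time a task is assisted, then look for the decisions that keep recurring. This is only an illustration under stated assumptions. The `TaskContext` record and its fields are hypothetical, and it is not a description of how any OpenBOM product stores or analyzes context.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class TaskContext:
    # Hypothetical record captured alongside an assisted task.
    task: str       # what the user was doing
    decision: str   # the judgment call made along the way
    consulted: str  # who or what was consulted before deciding


def recurring_decisions(
    log: List[TaskContext], min_count: int = 2
) -> List[Tuple[Tuple[str, str], int]]:
    """Return (task, decision) pairs seen at least min_count times, most frequent first."""
    counts = Counter((entry.task, entry.decision) for entry in log)
    return [(pair, n) for pair, n in counts.most_common() if n >= min_count]


log = [
    TaskContext("release BOM", "hold for routing", "manufacturing planner"),
    TaskContext("release BOM", "hold for routing", "manufacturing planner"),
    TaskContext("route ECO", "call supplier first", "sourcing"),
    TaskContext("release BOM", "hold for routing", "engineering manager"),
]
print(recurring_decisions(log))
```

A pair that shows up again and again is a candidate for an explicit rule. A pair that shows up once is probably still a judgment call that belongs with a person.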

That is a much more believable path than assuming an agent can arrive fully formed and instantly understand how a company functions.

A More Realistic View of AI Agents in PLM

AI agents may become a forcing function for a much older problem. They may finally push organizations to confront something PLM has been wrestling with for decades: the difference between what the process diagram says and what people actually do.

And maybe that is the most valuable part of this whole trend. Not that agents will remove the need to understand work, but that they will expose how necessary that understanding always was.

So when I hear “agentification of PLM,” I do not dismiss it. But I also do not see it as a magic answer. The first question is not how many agents you can deploy. The first question is whether your organization understands its own work well enough to guide them.

If the answer is no, then the first step is not automation. It is observation. It is making hidden operating logic visible. It is understanding the real tasks, decisions, dependencies, and friction points that shape product work every day.

Only after that does the agent become truly useful.

Conclusion

PLM adoption was always a work problem disguised as a software problem.

AI agents are now revealing the same truth in a new form.

The companies that benefit most from this next wave will not be the ones with the biggest demos or the loudest claims. They will be the ones that take the time to understand how their work actually happens and build agents from that foundation.

Before you agentify PLM, understand the work.

Register for free and check how OpenBOM can help.

If you use SOLIDWORKS, talk to us about CAD File Agent for SOLIDWORKS.

Best, Oleg
