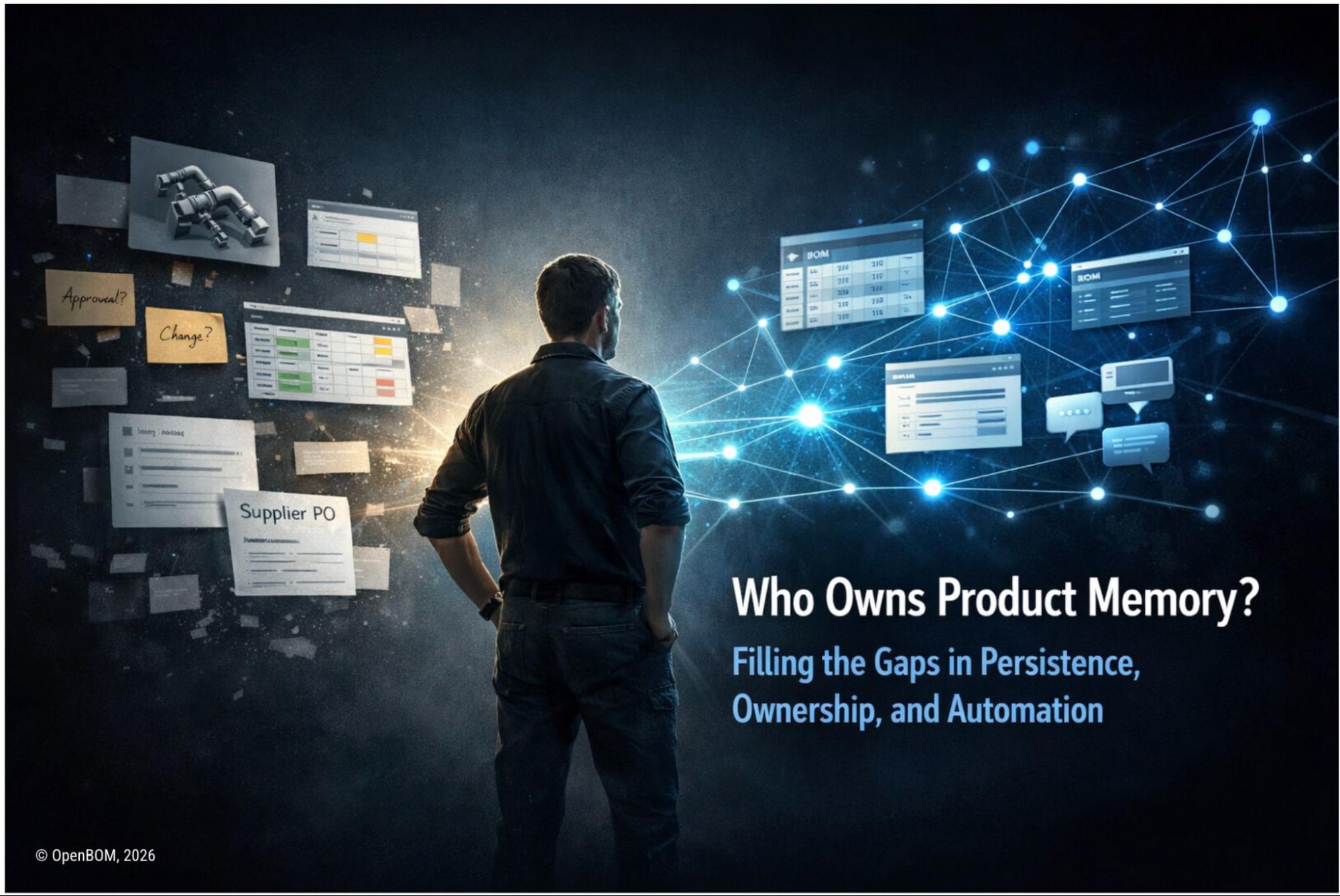

After I introduced the idea of Product Memory, one question kept coming up in almost every conversation: “Who actually owns it — and where does it live?”

It’s a fair thing to ask. Because if Product Memory ends up being just another system of record, another database that someone controls, then we haven’t learned much from the last two decades of PLM.

And honestly, I think that’s part of why the question feels loaded. We’ve spent years thinking about product data in a very specific way. There must be a system. That system must have a database. That database must be the “single source of truth.” And some vendor must own it, define it, and control who gets access.

So when people hear “Product Memory,” they try to map it into that same model. Where’s the database? Which system owns it? Who manages access?

But that’s exactly the wrong way to think about it. Product Memory is not a single system, and it doesn’t live in one place. It is collected, organized, and evolves to help companies understand how things come together and why decisions were made.

Why Traditional PLM Systems Struggle with Product Data Ownership

The original ambition behind PLM was actually pretty compelling. The idea was a digital backbone for product information — from early design all the way through manufacturing and service. Everything connected. Everything traceable. Available when you need it.

In practice, that vision narrowed considerably.

PLM became a system of record. A place to store, structure, and control data. A central database that defines what’s “official,” backed by governance, permissions, workflows, and a substantial amount of ongoing effort just to keep it current.

That shift wasn’t random. Technology constraints pushed it in that direction. So did enterprise IT patterns and, frankly, business models. If you own the data, you can monetize access. You can enforce structure. You can sell control.

But there was a price. Data fragmented across systems. Engineering lived in CAD and spreadsheets. Manufacturing worked in ERP. Procurement had its own tools. Communication went through email, Slack, Teams. And PLM, ironically, often ended up being just another silo rather than the bridge it was supposed to be.

We ended up with multiple “sources of truth,” each incomplete in its own way. More importantly, we lost something less obvious. We lost the ability to remember how things actually came together and WHY did we make the change.

What Is Product Memory? A New Model Beyond CAD files, PLM Systems of Record, And Product Data Sources

When I talk about Product Memory, I’m not describing a replacement for PLM, ERP, or CAD. I’m describing a different way to think about how product knowledge exists and evolves. Product Memory doesn’t live in a single database. It’s a layer that connects information across systems.

The CAD model stays in CAD. The BOM might live in a BOM tool or ERP. Documents are stored somewhere else. Conversations happen in Slack or email. Decisions get made in meetings, in comment threads, in quick back-and-forths that never make it into any formal system.

All of that is part of the product. But none of it, on its own, is the memory. The memory is in the connections — how these pieces relate to each other, how they change over time, and the context that explains not just what changed, but why.

So when people ask “where does Product Memory live?” they’re asking the wrong question. It’s a bit like asking where your memory lives. It’s not a single file. It’s a network of associations.

The same is true here.

How Product Memory Is Stored: Distributed Data Models and System Topology

This is where things get concrete, because eventually people want to know how this actually gets persisted. The honest answer isn’t “it depends,” even though in practice it does. The better answer is that Product Memory follows a distributed topology.

Some of it is embedded in existing systems. Some is captured through workflows. Some might exist in a dedicated layer that models relationships across systems and connects them explicitly.

In one company, the memory might be implicit in how CAD files reference each other. In another, it’s captured through a change process. In a third, it’s represented as a graph of items, relationships, and events.

None of these alone is the memory. They are fragments of it. Product Memory emerges when those fragments are connected. Which brings us back to ownership.

Who Builds Product Memory? Vendors, Companies, and AI Agents Explained

Product Memory obviously keeps the data in database and storage. What is stored and the implementation of a product memory can be different and dependent on the use case, company infrastructure. The underlying data gets constructed through the interaction of three different forces.

The first is infrastructure. Platforms provide the ability to connect data, define relationships, and make information usable. They create the foundation on which memory can exist.

The second is the company itself. Every organization defines its own meaning. What counts as a part? What does a release mean? What matters in a BOM? What constitutes a real change? These definitions aren’t universal — they’re shaped by the business, the products, and the people running them.

The third is something newer: agents. Not integrations or scripts in the traditional sense, but increasingly capable mechanisms that can observe, interpret, and organize data as it flows through the system — detecting patterns, inferring relationships, capturing context without requiring someone to manually enter it.

Product Memory isn’t installed once. It’s continuously built by these three elements working together. That construction process becomes clearer when you look at what Product Memory actually does.

Product Memory as a Gap-Filling Layer in Engineering and Manufacturing Systems

The simplest way I’ve found to explain Product Memory is this: it fills the gaps that existing systems leave behind. Every system is good at something.

CAD handles geometry. ERP manages transactions. PLM controls structured documents and changes. Communication tools capture conversations. But none of them captures the full picture. And each one ignores things the others handle.

In some companies, the gap is structural — there’s no consistent way to define a product across tools. Files are disconnected. BOMs live in spreadsheets. Data is scattered across drives and inboxes.

In others, the gap is contextual — the structure exists, but the meaning is missing. Decisions aren’t captured. Relationships between systems are fuzzy. Changes propagate in ways that are hard to trace after the fact.

Different environments, different problems. But the same underlying issue. Product Memory is what starts to fill those blind spots.

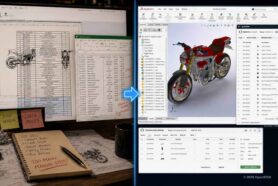

How Product Memory Organizes Engineering Data (SolidWorks, Excel, and BOM Challenges)

Consider a typical engineering or manufacturing team.

They use SolidWorks for design. BOMs are managed in Excel. Procurement happens through email or a lightweight ERP. Communication flows through Slack threads. Files live in folders — sometimes organized, sometimes not.

There’s no single place where the product is defined. Or rather, there are many places, each with a partial view.

The problem isn’t too many systems. It’s the absence of structure across them.

Parts aren’t consistently defined. Assemblies aren’t always reflected in BOMs. Supplier information lives somewhere separate. Changes happen, but not in any way that’s reliably captured.

This is a structural gap. Product Memory, in this case, starts by organizing what already exists.

Infrastructure provides a way to define items, connect CAD data to BOMs, and establish relationships between parts, assemblies, and suppliers — without replacing the tools engineers are already using. It connects them.

The company defines what matters. What counts as a part. How a BOM should be structured. What information needs to be tracked.

And then agents start doing something that wasn’t really possible before.

They scan file structures. Extract BOMs from CAD. Detect relationships between components. Identify duplicates. Bring spreadsheet data into a structured form. Watch changes over time.

Gradually, what was unstructured becomes structured — not because someone manually entered everything into a system, but because the system learned to organize what was already there.

Nothing got replaced. But something new emerged: a coherent product definition where previously there was fragmentation.

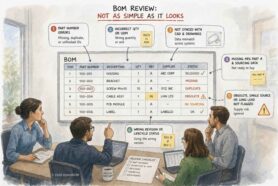

How Product Memory Connects PLM and ERP Systems in Enterprise Environments

Now consider the opposite scenario.

An enterprise company with established PDM/PLM and ERP systems. Structure exists. Processes are defined. Change management workflows are in place. Data is often well-organized within each system.

But even here, something is missing. Engineering and manufacturing views don’t consistently align. Changes transfer from PLM to ERP — but not always completely. Supplier information evolves independently. Decisions get made, but the reasoning rarely gets captured in a way that’s accessible later.

Each system is complete in its own domain. The connections between them are not. This is a contextual gap. Product Memory, in this environment, doesn’t rebuild the systems. It fills what they don’t capture.

Infrastructure acts as a connective layer — linking data between PLM and ERP, modeling relationships that don’t fit neatly into either. It provides a sandboxing for decision and then captures the context that is missing today.

The company defines how these systems should relate. What a complete product view looks like. How engineering, manufacturing, and procurement perspectives are supposed to align.

And agents start observing and interpreting.

They detect inconsistencies between systems. Track how changes propagate. Identify mismatches between engineering and manufacturing BOMs. Infer the impact of supplier changes. Capture the context around decisions — not just the outcome, but the reasoning behind it.

Over time, something shifts. The systems still exist. They still do their jobs.But now there’s a layer that connects them. That explains them. That provides continuity across them. The data was always there. The understanding was not.

From Data Silos to Connected Product Memory: A Unified Pattern Across Systems

These two scenarios look very different on the surface.

One lacks structure. It has CAD files located in multiple places, no revisions, and dependencies. The other use case lacks context. One is fragmented. The other is organized but disconnected.

But the underlying pattern is the same. In both cases, Product Memory emerges by filling what the systems don’t capture. Not by replacing them. Not by centralizing everything into a new database. By connecting, organizing, and interpreting what already exists.

How AI and Automation Enable Product Memory Capture and Intelligence

This brings up the question of how Product Memory actually gets collected. Because if the answer is “people will enter it manually,” then we’re back to the same limitations that PLM has struggled with for years.

Product Memory cannot rely on manual input. It has to be captured automatically. Some of that capture is passive — capture files and changes, updates in systems, events in workflows all generate signals.

Some of it comes through process. Comments, approvals, releases, change orders provide structure and context.

But the most interesting part is what agents can do beyond that. They don’t just record events — they interpret them. They can look at a change and understand what it affects. Connect a decision made in a conversation to a change in a BOM. Detect patterns across projects. Surface insights that would otherwise stay buried.

This is where Product Memory becomes more than a collection of data. It becomes a form of understanding. And without that level of automation, it simply doesn’t work.

From Data Ownership to Product Intelligence: The Future of PLM Business Models

All of this points to a deeper shift — one that goes beyond architecture.

For years, PLM systems were built around the idea of owning data. The system that owns the data defines the structure, controls access, and captures the value.

But if Product Memory is distributed — spanning multiple systems, constructed dynamically through connections and interpretation — then ownership starts to look different.

The value is no longer in controlling the data. It’s in understanding it. In being able to answer questions quickly. Trace decisions. Anticipate issues before they become problems.

The value shifts from storage to intelligence. That has implications not just for technology but for business models. Instead of paying for access to a database, companies start paying for the capacity, ability to make sense of their data, ability to make their decisions. Instead of locking data into a system, they benefit from keeping it connected and accessible. Paying for access should be fine, but it must be open and portable.

This is a genuinely different way of thinking. It’s not fully realized yet. But the direction is becoming clearer.

Conclusion: Who Owns Product Memory in the Age of Connected Product Data?

We can come back to the original question now.

Who owns Product Memory? No single entity does. Vendors provide infrastructure. Companies define meaning. Agents generate and maintain the memory itself. It’s shared, constructed, and continuously evolving.

And maybe that’s the most important shift. Because the question is no longer who owns the data. The real question is who can turn it into understanding. That’s where Product Memory begins.

Interested to talk more about Product Memory and how this architecture can help you?

REGISTER FOR FREE to check OpenBOM and contact our support team.

Best, Oleg

Join our newsletter to receive a weekly portion of news, articles, and tips about OpenBOM and our community.