Product development is accelerating, and growing product complexity is killing traditional system architecture. Yesterday, Martin Eigner’s article about software BOMs caught my attention, and I found that it resonates with many of the strategic assumptions behind OpenBOM’s development and architecture.

Martin framed his thesis, Why Software Development Breaks Traditional PLM, in a LinkedIn post accompanied by a very thoughtful and insightful paper that I recommend everyone read. In it, SBOM is described as the thing that finally breaks the traditional BOM. Software behaves differently, development cycles move faster, dependencies multiply, and the neat hierarchical structures that worked so well for mechanical products begin to feel strained. From that perspective, it is easy to conclude that PLM simply cannot keep up with the software world.

I don’t think that is what is actually happening.

Software did not suddenly invalidate PLM, and SBOM did not introduce an entirely new class of problems. What SBOM really did was expose something that had already been changing beneath the surface for quite some time. The role of the BOM had been expanding year after year, absorbing responsibilities that went far beyond its original purpose, until eventually the limits of the model became visible.

The tension we see today is less about software breaking PLM and more about the industry realizing that the product itself is no longer what we assumed it to be.

Software Is Different — but That’s Not the Point

Martin Eigner described this difference in a very precise way when explaining why software development challenges traditional PLM assumptions. Software artifacts do not behave like mechanical parts. They are not frozen objects moving cleanly from one revision to the next. Instead, they evolve through branching, merging, automated builds, and continuous deployment pipelines. A software release is rarely a single thing. It is a composition of code, dependencies, build instructions, configurations, and runtime environments that together define behavior at a specific moment.

Equally important is how traceability works in software. The lifecycle does not stop at release. Requirements connect to tickets, tickets to commits, commits to builds, builds to deployments, and deployments to operational feedback and incident response. The chain remains alive long after the product leaves engineering.
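To make that chain concrete, here is a minimal sketch of how such trace links can be walked in both directions. The artifact identifiers (`REQ-1`, `TICKET-10`, `build-100`, and so on) and the `TRACE_LINKS` list are hypothetical, invented only for illustration. They do not come from any specific ALM or PLM tool.

```python
from collections import defaultdict

# Hypothetical trace links: (upstream artifact, downstream artifact).
TRACE_LINKS = [
    ("REQ-1", "TICKET-10"),
    ("TICKET-10", "commit-a1"),
    ("TICKET-10", "commit-b2"),
    ("commit-a1", "build-100"),
    ("commit-b2", "build-100"),
    ("build-100", "deploy-eu"),
    ("build-100", "deploy-us"),
]


def downstream(start, links):
    """Return every artifact reachable from `start` by following links forward."""
    children = defaultdict(list)
    for src, dst in links:
        children[src].append(dst)
    seen, stack = set(), [start]
    while stack:
        node = stack.pop()
        for nxt in children[node]:
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen


def upstream(start, links):
    """Return every artifact that led to `start` (same walk, links reversed)."""
    return downstream(start, [(dst, src) for src, dst in links])
```

Calling `downstream("REQ-1", TRACE_LINKS)` answers "where did this requirement end up?", while `upstream("deploy-eu", TRACE_LINKS)` answers "what is this deployment made of and why?". Both questions use the same links, which is the point: the chain is a graph, not a one-way handoff.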

ALM systems exist precisely because of this reality. They are designed to manage process, iteration, and continuous change. PLM systems, by contrast, grew out of a world where the primary challenge was controlling product structure and managing revisions across engineering and manufacturing boundaries.

This difference is real and important. But recognizing that software behaves differently does not yet explain why SBOM feels disruptive. It only explains why software needs its own lifecycle tools.

The more interesting question is why this difference suddenly feels like a structural problem for PLM at all.

Why Does SBOM Feel Like a Disruption for PLM?

The discomfort around SBOM comes from the fact that it answers questions that traditional BOM structures were never designed to address. A classical PLM BOM describes what is assembled, how components relate physically, and which revisions are approved for production. It provides clarity around structure and configuration, which is exactly what engineering and manufacturing need.

An SBOM, however, operates in a different space. Instead of describing assembly, it describes dependency. Instead of listing explicitly included components, it exposes transitive relationships that may never have been directly selected by an engineer. Instead of remaining stable between releases, it is regenerated as part of the build process, reflecting the current state of software composition.
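A small sketch may help show the difference between explicitly included components and transitive ones. The package names and the `DIRECT_DEPS` table below are hypothetical, and the resolver is a simplified illustration, not how any particular SBOM generator works.

```python
# Hypothetical declarations: package -> packages it directly requires.
DIRECT_DEPS = {
    "firmware-app": ["http-client", "json-parser"],
    "http-client": ["tls-lib", "json-parser"],
    "tls-lib": ["crypto-core"],
    "json-parser": [],
    "crypto-core": [],
}


def resolve_sbom(root, deps):
    """Return (direct, transitive) component sets for `root`.

    `direct` is what an engineer explicitly selected; `transitive` is
    everything else that gets pulled in through those selections.
    """
    direct = set(deps.get(root, []))
    closure, stack = set(), list(direct)
    while stack:
        pkg = stack.pop()
        if pkg in closure:
            continue
        closure.add(pkg)
        stack.extend(deps.get(pkg, []))
    return direct, closure - direct
```

In this example, nobody chose `tls-lib` or `crypto-core` for `firmware-app`, yet both end up in the resolved composition. Because the result is computed from the declarations, it changes whenever any declaration changes, which is why an SBOM is regenerated rather than revised.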

What makes this uncomfortable is not that SBOM is more complex, but that it forces us to look at the product from a different angle. Hardware BOMs describe different states of what was engineered, planned and built. SBOMs describe what is developed and what is running in the field. Those perspectives overlap, but they are not the same.

When organizations attempt to force SBOM into traditional BOM hierarchies, friction is almost inevitable. The structure begins to feel artificial, and the conclusion often drawn is that software somehow breaks the model. In reality, SBOM simply refuses to hide relationships that were previously implicit or ignored.

At this stage, the conversation typically shifts toward integration strategies. ALM should manage software. PLM should manage product structure. Systems should synchronize through APIs and events. All of that is correct, but it still addresses the symptoms rather than the underlying shift.

Graph Model Moment

The deeper change becomes visible only when we step back and reconsider what the BOM was originally meant to represent. For decades, the BOM functioned as a practical abstraction of the product. It allowed engineering, manufacturing, and procurement to align around revision-controlled structures, and for a long time that abstraction was sufficient.

Software challenges this assumption not because it is more complicated, but because it exposes relationships that cannot be flattened into a single hierarchy without losing meaning.

This is where the conversation changes.

SBOM reveals that products are becoming graph-based systems.

Once this idea sinks in, many of the apparent conflicts start to make sense. Software development already treats artifacts as nodes in a graph connected by dependencies, history, and provenance. Electronics increasingly behave the same way. Even mechanical systems, once isolated, now participate in configurations, variants, and lifecycle feedback loops that extend far beyond initial design.

The issue is no longer about fitting software into PLM. It is about recognizing that the product itself has evolved beyond the structural assumptions on which BOM-centric thinking was built.

From Hierarchical Structure to Product Memory

Historically, PLM systems were optimized for stability. The central task was to manage revisions, ensure consistency, and protect the integrity of released configurations. The BOM served as the anchor around which everything else revolved, and as products became more complex, additional responsibilities were layered onto it almost by necessity.

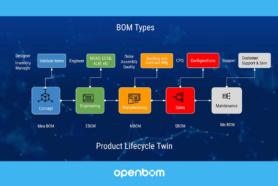

Over time, the BOM became responsible not only for design definition but also for manufacturing planning, cost analysis, supplier coordination, service documentation, and compliance tracking (this is a place where EBOM, MBOM, and other BOMs live). Each addition made sense in isolation, yet collectively they transformed the BOM into something it was never designed to be.

Software did not introduce this overload; it simply accelerated it. Software artifacts are defined by relationships and history rather than containment. Their meaning comes from how they evolved and how they interact at runtime, not from their position in a hierarchy.

Once products begin to accumulate decisions, dependencies, and operational knowledge over time, it becomes more accurate to think of them as memory systems rather than static structures. The product is no longer just what it contains. It is also how it came to be and how it continues to change.

BOMs Become Views of a Product Memory Graph

Seen from this perspective, the proliferation of BOM types suddenly stops looking like a problem. Engineering BOMs, manufacturing BOMs, service BOMs, and now software BOMs are not competing definitions of the product. They are different ways of looking at the same underlying reality.

In a product memory model, the underlying representation is not a single hierarchy but a connected graph of artifacts, relationships, and traceable decisions accumulated across the lifecycle. Each BOM becomes a view optimized for a particular purpose, answering a particular set of questions at a particular moment in time.

The engineering view focuses on design intent. Manufacturing focuses on build strategy and process. Service focuses on maintainability and replacement logic. SBOM focuses on runtime dependencies and risk exposure.
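One way to picture this is a single set of typed relationships, with each BOM produced by filtering on the relationship types it cares about. The sketch below is only an illustration under my own assumptions: the item names, the relation labels (`contains`, `built_on`, `serviced_by`, `depends_on`), and the `VIEWS` mapping are all hypothetical and do not describe OpenBOM's data model or any other product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Edge:
    source: str
    target: str
    relation: str  # hypothetical labels, see VIEWS below


# One shared graph of relationships (hypothetical example data).
GRAPH = [
    Edge("controller", "pcb", "contains"),
    Edge("controller", "enclosure", "contains"),
    Edge("controller", "firmware-2.1", "contains"),
    Edge("pcb", "smt-line-3", "built_on"),
    Edge("pcb", "pcb-spare-kit", "serviced_by"),
    Edge("firmware-2.1", "tls-lib", "depends_on"),
    Edge("tls-lib", "crypto-core", "depends_on"),
]

# Each BOM type is a view: a choice of which relations matter.
VIEWS = {
    "engineering": {"contains"},
    "manufacturing": {"contains", "built_on"},
    "service": {"serviced_by"},
    "software": {"depends_on"},
}


def bom_view(graph, view_name):
    """Project the shared graph onto one view as (parent, child) pairs."""
    relations = VIEWS[view_name]
    return [(e.source, e.target) for e in graph if e.relation in relations]
```

No view in this sketch is the master. Adding a new BOM type means adding an entry to `VIEWS`, not copying or restructuring the underlying data.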

The mistake is assuming that one of these views must be the master representation. SBOM makes that assumption difficult to maintain because its meaning collapses when forced into a purely hierarchical form. It exposes that the hierarchy was never the product itself, only a useful projection.

Why Hierarchical Structures Collapse

Hierarchical structures work best in environments where change is relatively slow and can be captured by a structured process (e.g., a new revision). Even when revisions occur, the expectation is that the structure remains understandable and stable until the next revision is approved.

Continuous change challenges that expectation. Software branching creates parallel histories. Dependencies introduce shared lineage across multiple products. Runtime configurations diverge from build-time assumptions. Security issues require tracing not just what was designed but what was deployed and where.

This is not fundamentally a software problem. It is a consequence of products evolving continuously after release. The lifecycle no longer ends at manufacturing. It extends into operation, monitoring, and ongoing updates.

In such an environment, treating change as a discrete event becomes increasingly insufficient. The context surrounding change becomes as important as the change itself.

GitHub Changed Change Management — Quietly

One of the most significant shifts introduced by modern software development platforms was not technical but conceptual. GitHub changed how engineers collaborate around change by making the discussion and reasoning part of the record itself. A pull request captures intent, alternatives, and trade-offs, turning change into a shared process rather than a final decision.

Traditional PLM workflows evolved differently. Change discussions often happened outside the system, while the system recorded only approvals and outcomes (revisions). That approach made sense when the primary goal was control and traceability at release boundaries.

As products become more interconnected and multidisciplinary, however, the reasoning behind change becomes increasingly valuable. Decisions made early in development resurface years later during service events, audits, or security investigations. Without preserved context, organizations are forced to reconstruct intent from incomplete information.

SBOM highlights this gap because vulnerability management demands answers that cannot be reconstructed after the fact.

ALM and PLM Are Not the End State

The natural conclusion is not that ALM replaces PLM or that PLM must absorb ALM functionality. Each domain has evolved for valid reasons and continues to serve different purposes. ALM manages software process and iteration. PLM governs interdisciplinary product configuration and lifecycle control.

What is changing is the layer beneath both systems. Increasingly, they operate on shared product memory, even when implemented through separate tools. Requirements link across domains, releases reference shared artifacts, and changes propagate through interconnected relationships rather than isolated updates.

Integration, therefore, becomes less about synchronizing data and more about preserving meaning and continuity across the lifecycle.

Why SBOM Arrived First

SBOM became the catalyst for this discussion because it sits closest to operational reality. I can ship a product and later update the software (oops… that never happened in the electro-mechanical world). When vulnerabilities appear or regulatory requirements demand traceability, ambiguity is no longer acceptable. Organizations must know precisely which components exist in deployed products and how they relate to design and manufacturing decisions.
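Here is a minimal sketch of the kind of question a vulnerability report forces: which shipped units are running an affected component? The serial numbers, release names, component versions, and the two lookup tables are hypothetical, made up for this example only.

```python
# Hypothetical records.
# Software release -> resolved component versions (a simplified SBOM).
SBOMS = {
    "firmware-2.0": {"tls-lib": "1.0.2", "json-parser": "3.1"},
    "firmware-2.1": {"tls-lib": "1.1.0", "json-parser": "3.1"},
}

# Unit serial number -> release currently installed in the field.
DEPLOYED = {
    "SN-001": "firmware-2.0",
    "SN-002": "firmware-2.1",
    "SN-003": "firmware-2.0",
}


def affected_units(component, bad_versions, sboms, deployed):
    """Return serial numbers of units whose installed release includes
    `component` at one of the `bad_versions`."""
    hit_releases = {
        release
        for release, components in sboms.items()
        if components.get(component) in bad_versions
    }
    return sorted(sn for sn, release in deployed.items() if release in hit_releases)
```

The lookup itself is trivial. The hard part in practice is having both tables at all: one comes from the software build, the other from manufacturing and service records, and they only answer the question when they are connected.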

In this sense, SBOM acts as a stress test for product knowledge. It exposes fragmentation that already existed but could previously be tolerated.

What SBOM forces organizations to confront is not software complexity but the absence of coherent memory across the product lifecycle.

Conclusion: The End of the Traditional PLM BOM Mindset

None of this diminishes the importance of BOM management. PLM BOMs remain essential tools for engineering and manufacturing coordination. What changes is their role. Instead of acting as the singular system of record, they become specialized views into a broader and continuously evolving product definition.

Trying to make one BOM carry every responsibility inevitably leads to complexity and rigidity. Allowing multiple views to coexist over shared product memory restores clarity.

Software did not break PLM, and Software BOM did not invalidate traditional PLM BOM management. What changed is our understanding of what a product actually is.

Modern products are no longer static assemblies. They are evolving systems defined by relationships, dependencies, decisions, and change over time. In that environment, the future is not a better BOM but a better understanding of product memory, where BOMs become views into a living, continuously evolving product.

At OpenBOM, we developed a new data management platform to manage product information, integrate processes and systems, and connect companies with contractors and suppliers. Want to discuss how we can help you?

Contact us and we would be happy to talk.

Best, Oleg
